This question is one of the things our testers hear most often during job interviews. Is there one correct answer? Most certainly not! So… how is a future “junior” supposed to tackle this obstacle? To explain the problem, let’s approach it “tester-style” and think backwards.

What would happen if no tests were performed? Would it mean that software would stop working? Traffic lights would display the wrong colours? Lifts would stop taking people to the correct floors?

I highly doubt that would be the case, for the simple reason that most developers check whether their code works. No, they do not test the whole program, but they do check that particular pieces of their code work as expected in practice. Yet that verification is done under specific conditions and for a very limited number of cases.

For the end user it might mean that software works… until you save a file with a very long name, your PC differs substantially from the developer’s machine, or you attempt to minimise the window. Traffic lights work fine… until a reset is performed after a power failure, a light bulb fails, or someone presses the “light change button” twice. Lifts move up and down fine… until someone gets stuck in the door, or people want to go in opposite directions.

These cases seem very typical and obvious, yet practice has shown that they can be overlooked as early as the code writing stage. Naturally, this stems from the tendency to focus on the task at hand and not consider the bigger picture. Considering that bigger picture is exactly the idea behind testing: first of all, it means checking the basic and seemingly obvious paths that others often disregard.

Secondly, it means predicting the unlikely events that probably should never happen but are still possible. They could result in errors in the application and lead to user annoyance, financial loss, or even death, depending on the purpose of the program.
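The difference between a developer’s quick check and a tester’s questions can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `build_file_name` is a hypothetical helper invented for this example, and the 255-character limit is an assumed value, not a rule taken from any particular system.

```python
MAX_NAME_LENGTH = 255  # assumed limit, chosen only for this illustration


def build_file_name(title: str, extension: str = "txt") -> str:
    """Hypothetical helper: turn a document title into a file name."""
    cleaned = title.strip()
    if not cleaned:
        raise ValueError("title must not be empty")
    suffix = "." + extension
    # Trim the title so that the complete name fits within the assumed limit.
    cleaned = cleaned[: MAX_NAME_LENGTH - len(suffix)]
    return cleaned + suffix


def check_typical_case() -> bool:
    # The check a developer would usually run: one ordinary input.
    return build_file_name("report") == "report.txt"


def check_very_long_name() -> bool:
    # The obvious path that is easy to disregard: a very long name.
    name = build_file_name("a" * 1000)
    return len(name) == MAX_NAME_LENGTH and name.endswith(".txt")


def check_empty_name() -> bool:
    # The unlikely event that is still possible: a title made of spaces only.
    try:
        build_file_name("   ")
    except ValueError:
        return True
    return False
```

The first check alone would let the code pass. The other two are the kind of questions a tester adds on top of it.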

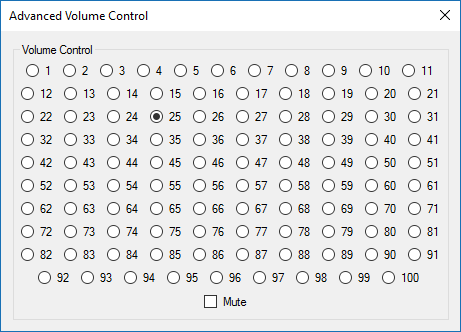

Also, it is not uncommon that testers are expected to do more than simply look for defects and see if the application works or not. Let’s assume a function of volume change in the range of 1 – 100 with the „mute” option was developed. And it was developed as such:

The problem is, it works perfectly fine. So should the tester accept the solution? Of course not! It is also a tester’s responsibility to use their experience and expertise to evaluate the quality of the tested solution, even if the solution works. In this case it should never see the light of day, as it is impractical, not user-friendly, and simply ugly… Testing serves the purpose of filtering out all those questionable ideas and asking: “Is that really what we want to release?”

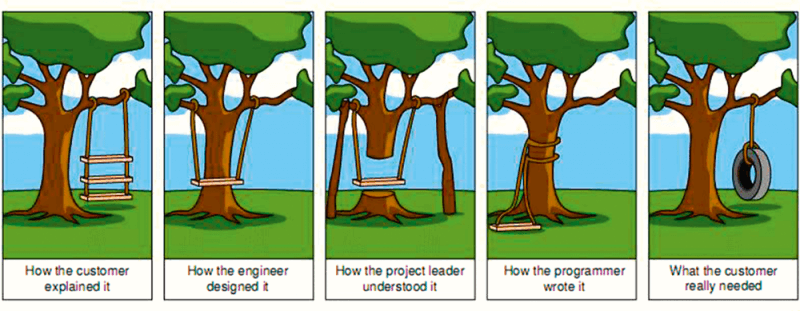

The problem is nicely illustrated by the hugely popular comic strip with a swing:

It shows how ridiculous a design can become if we allow a lack of communication, poor information flow, and no validation. We fall prey to our own biases and misconceptions. We need someone to look at our work in a different way, give us their independent opinion, and pinpoint the things we did not see. The sooner such a person is introduced the better: any potential design errors, false assumptions, and wrong interpretations should be eliminated right at the beginning. That is why testers should be a part of the whole application life cycle, from the first lines of code to the release of the finished program.

Finally, we test because we are told to do so… This is always the case when software involves human safety, is regulated by the government, or needs to be certified. At Solwit we work on projects for the railway, medical, and automotive industries, which come with very detailed sets of testing requirements and procedures laid out by particular ISO standards.

To sum up, testing is necessary for various reasons: we want to find potential defects across all use cases, ensure the best user experience for the clients, prevent bias and misconceptions within the project, and comply with the external regulations that apply to the particular assignment.

These are the most important testing goals, and their completion can be measured by the number of Quality Assurance artefacts produced in the process. Taking them all into consideration, we can come up with the most general, and possibly the most important, idea of why we test: we provide the project leaders and owners with metrics concerning the quality of the product and its development state.

At Solwit, we testers are often asked by clients, especially at the release stage of a given tool, whether the version is production-ready. The question comes up in spite of all the statistical data available for the build, because it is often a tester’s opinion that is ultimately the most trusted metric. It is the tester who knows the tool inside out, who spends hundreds of hours executing every function, changing every option, pressing every button, clicking everything that should and should not be clicked, and finally deciding whether the tool is technically ready to be handed to its future users. Yes, in my opinion this is why we test software.